mirror of

https://github.com/huggingface/diffusers.git

synced 2026-01-27 17:22:53 +03:00

Merge branch 'main' of https://github.com/huggingface/diffusers

74

README.md


@@ -1,29 +1,54 @@

# Diffusers

## Definitions

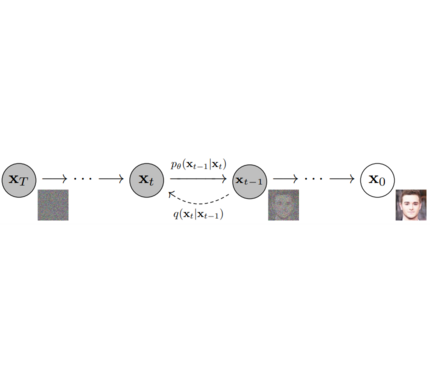

**Models**: A single neural network that models p_θ(x_{t-1} | x_t) and is trained to “denoise” a noisy input into an image.

*Examples: UNet, Conditioned UNet, 3D UNet, Transformer UNet*
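For orientation, the reverse transition that a model parameterizes is commonly written as a Gaussian in the DDPM formulation. The mean and covariance symbols below follow that convention and are an assumption here; they do not appear in this README.

```latex
p_\theta(x_{t-1} \mid x_t) = \mathcal{N}\!\left(x_{t-1};\ \mu_\theta(x_t, t),\ \Sigma_\theta(x_t, t)\right)
```

Under this reading, the network takes the noisy sample x_t and the timestep t, and its output determines the mean (and optionally the covariance) of the slightly less noisy x_{t-1}.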

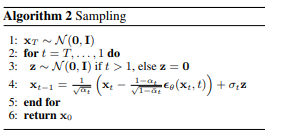

**Samplers**: Algorithm to *train* and *sample* from a **Model**. Defines the alpha and beta schedules, the timesteps, etc.

*Examples: Vanilla DDPM, DDIM, PLMS, DEIN*
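To make "alpha and beta schedule" concrete, here is a minimal sketch of a linear beta schedule and the forward noising step it implies. The function names, the default values (taken from the commonly used DDPM settings), and the plain-list representation are assumptions for illustration, not part of `diffusers`.

```python
import math
import random


def linear_beta_schedule(num_timesteps=1000, beta_start=1e-4, beta_end=0.02):
    """Return linearly spaced noise variances beta_1..beta_T (assumed defaults)."""
    step = (beta_end - beta_start) / (num_timesteps - 1)
    return [beta_start + i * step for i in range(num_timesteps)]


def cumulative_alphas(betas):
    """Return alpha_bar_t, the running product of alpha_s = 1 - beta_s."""
    out, prod = [], 1.0
    for beta in betas:
        prod *= 1.0 - beta
        out.append(prod)
    return out


def add_noise(x0, t, alphas_cumprod, rng):
    """Draw x_t from q(x_t | x_0) = N(sqrt(alpha_bar_t) * x0, (1 - alpha_bar_t) * I)."""
    a_bar = alphas_cumprod[t]
    noise = [rng.gauss(0.0, 1.0) for _ in x0]
    x_t = [math.sqrt(a_bar) * x + math.sqrt(1.0 - a_bar) * n for x, n in zip(x0, noise)]
    return x_t, noise


betas = linear_beta_schedule()
alphas_cumprod = cumulative_alphas(betas)
# Noise a tiny three-value "image" to timestep 500.
x_t, noise = add_noise([0.5, -0.25, 1.0], t=500, alphas_cumprod=alphas_cumprod, rng=random.Random(0))
```

During training, the model would be asked to recover `noise` from `x_t` and `t`; the same schedule is reused when sampling.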

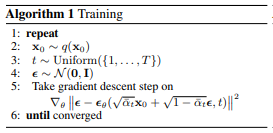

**Diffusion Pipeline**: End-to-end pipeline that includes multiple diffusion models and possibly text encoders or CLIP.

*Examples: GLIDE, CompVis/Latent-Diffusion, Imagen, DALL-E*
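The relationship between the three definitions can be sketched as a pipeline object that owns a model and a sampler and runs the reverse loop. Everything here (`ToyModel`, `ToyDDPMSampler`, `ToyPipeline`, and their methods) is hypothetical and only illustrates the wiring; it uses the standard DDPM ancestral update with variance beta_t and leaves out text encoders and CLIP.

```python
import math
import random


class ToyModel:
    """Hypothetical stand-in for a trained network that predicts the noise in x_t."""

    def __call__(self, x_t, t):
        return [0.0 for _ in x_t]  # untrained placeholder: always predicts zero noise


class ToyDDPMSampler:
    """Hypothetical sampler holding the beta schedule and the DDPM reverse update."""

    def __init__(self, num_timesteps=50, beta_start=1e-4, beta_end=0.02):
        step = (beta_end - beta_start) / (num_timesteps - 1)
        self.betas = [beta_start + i * step for i in range(num_timesteps)]
        self.alphas_cumprod, prod = [], 1.0
        for beta in self.betas:
            prod *= 1.0 - beta
            self.alphas_cumprod.append(prod)

    def step(self, eps, x_t, t, rng):
        beta, a_bar = self.betas[t], self.alphas_cumprod[t]
        coef = beta / math.sqrt(1.0 - a_bar)
        # Posterior mean: (x_t - beta_t / sqrt(1 - alpha_bar_t) * eps) / sqrt(alpha_t)
        mean = [(x - coef * e) / math.sqrt(1.0 - beta) for x, e in zip(x_t, eps)]
        if t == 0:
            return mean  # no noise is added on the final step
        sigma = math.sqrt(beta)
        return [m + sigma * rng.gauss(0.0, 1.0) for m in mean]


class ToyPipeline:
    """Hypothetical end-to-end wrapper: start from noise, denoise step by step."""

    def __init__(self, model, sampler):
        self.model, self.sampler = model, sampler

    def __call__(self, size, seed=0):
        rng = random.Random(seed)
        x = [rng.gauss(0.0, 1.0) for _ in range(size)]  # pure noise x_T
        for t in reversed(range(len(self.sampler.betas))):
            eps = self.model(x, t)
            x = self.sampler.step(eps, x, t, rng)
        return x


sample = ToyPipeline(ToyModel(), ToyDDPMSampler())(size=4)
```

Keeping the model and the sampler as separate objects means either one can be swapped (for example, a different update rule with the same network) without touching the loop.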

## Library structure:


```
├── models
│   ├── dalle2
│   │   ├── modeling_dalle2.py
│   │   ├── README.md
│   │   └── run_dalle2.py
│   ├── ddpm
│   │   ├── modeling_ddpm.py
│   │   ├── README.md
│   │   └── run_ddpm.py
│   ├── glide
│   │   ├── modeling_glide.py
│   │   ├── README.md
│   │   └── run_dalle2.py
│   ├── imagen
│   │   ├── modeling_dalle2.py
│   │   ├── README.md
│   │   └── run_dalle2.py
│   └── latent_diffusion
│       ├── modeling_latent_diffusion.py
│       ├── README.md
│       └── run_latent_diffusion.py
│   ├── audio
│   │   └── fastdiff
│   │       ├── modeling_fastdiff.py
│   │       ├── README.md
│   │       └── run_fastdiff.py
│   └── vision
│       ├── dalle2
│       │   ├── modeling_dalle2.py
│       │   ├── README.md
│       │   └── run_dalle2.py
│       ├── ddpm
│       │   ├── modeling_ddpm.py
│       │   ├── README.md
│       │   └── run_ddpm.py
│       ├── glide
│       │   ├── modeling_glide.py
│       │   ├── README.md
│       │   └── run_dalle2.py
│       ├── imagen
│       │   ├── modeling_dalle2.py
│       │   ├── README.md
│       │   └── run_dalle2.py
│       └── latent_diffusion
│           ├── modeling_latent_diffusion.py
│           ├── README.md
│           └── run_latent_diffusion.py
├── src
│   └── diffusers
│       ├── configuration_utils.py
@@ -38,7 +63,14 @@
│   └── test_modeling_utils.py
```

## Dummy Example

## 1. `diffusers` as a central modular diffusion and sampler library

`diffusers` should be more modular than `transformers`, so that parts of it can easily be reused in other libraries.

It could become a central place for all kinds of models, samplers, training utilities, and processors required when using diffusion models in audio, vision, and other domains.

One should be able to save both models and samplers as well as load them from the Hub.
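As a rough illustration of what "save and load" could mean for a sampler, the sketch below round-trips a sampler's settings through a JSON file in a local directory. The class `ToySamplerConfig`, its methods, and the file name are hypothetical and are not the `diffusers` API; uploading to or downloading from the Hub is deliberately left out.

```python
import json
import os
import tempfile


class ToySamplerConfig:
    """Hypothetical sampler whose state is fully described by a few scalars."""

    config_name = "sampler_config.json"  # assumed file name, for illustration only

    def __init__(self, num_timesteps=1000, beta_start=1e-4, beta_end=0.02):
        self.num_timesteps = num_timesteps
        self.beta_start = beta_start
        self.beta_end = beta_end

    def save_config(self, directory):
        """Write the instance attributes to <directory>/<config_name> as JSON."""
        os.makedirs(directory, exist_ok=True)
        path = os.path.join(directory, self.config_name)
        with open(path, "w", encoding="utf-8") as f:
            json.dump(vars(self), f, indent=2)
        return path

    @classmethod
    def from_config(cls, directory):
        """Rebuild a sampler from a previously saved JSON file."""
        with open(os.path.join(directory, cls.config_name), encoding="utf-8") as f:
            return cls(**json.load(f))


with tempfile.TemporaryDirectory() as tmp:
    ToySamplerConfig(num_timesteps=50).save_config(tmp)
    reloaded = ToySamplerConfig.from_config(tmp)
```

Because a sampler in this sketch has no learned weights, a small config file is enough to reproduce it; a model would additionally need its weights stored alongside the config.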

Example:

```python
from diffusers import UNetModel, GaussianDiffusion
import torch
```