Mirror of https://github.com/prometheus-community/postgres_exporter.git (synced 2025-08-06 17:22:43 +03:00)

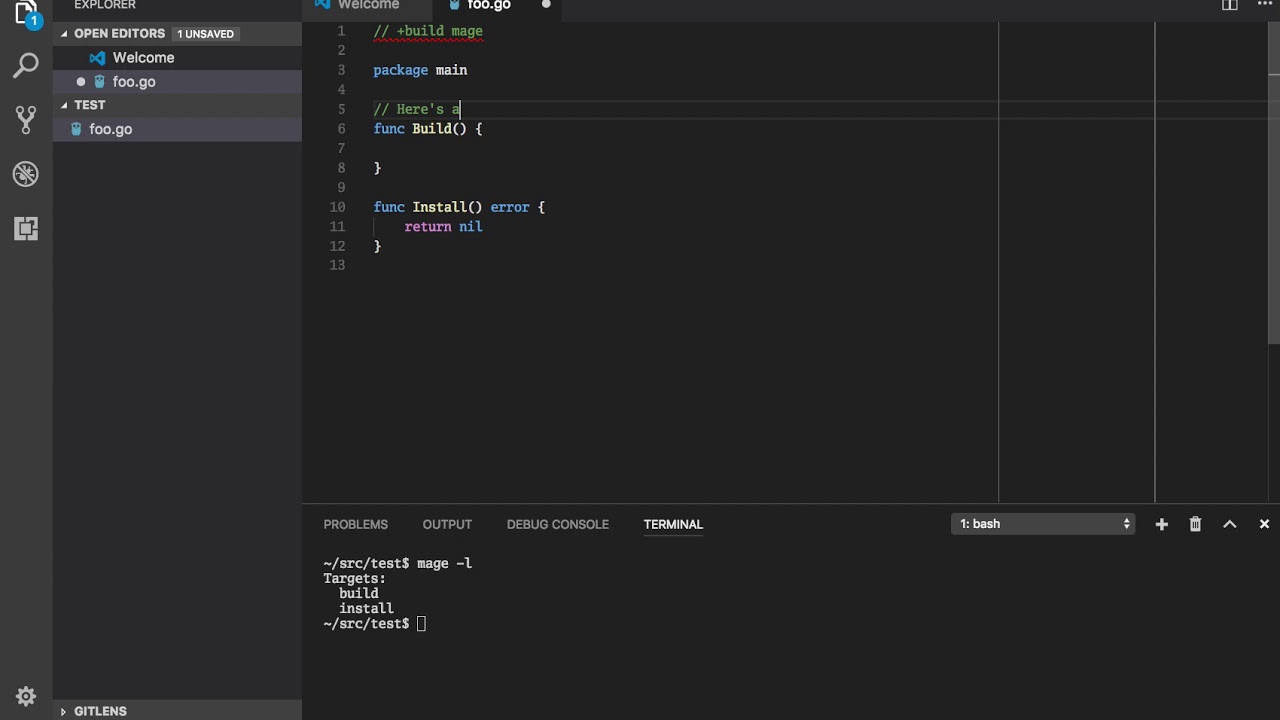

Refactor repository layout and convert build system to Mage.

This commit implements a massive refactor of the repository, and moves the build system over to use Mage (magefile.org) which should allow seamless building across multiple platforms.

24

vendor/github.com/dsnet/compress/LICENSE.md

generated

vendored

Normal file

@@ -0,0 +1,24 @@

Copyright © 2015, Joe Tsai and The Go Authors. All rights reserved.

Redistribution and use in source and binary forms, with or without
modification, are permitted provided that the following conditions are met:

* Redistributions of source code must retain the above copyright notice, this
list of conditions and the following disclaimer.
* Redistributions in binary form must reproduce the above copyright notice,
this list of conditions and the following disclaimer in the documentation and/or
other materials provided with the distribution.
* Neither the copyright holder nor the names of its contributors may be used to
endorse or promote products derived from this software without specific prior
written permission.

THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS "AS IS" AND
ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED
WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE ARE
DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT HOLDER BE LIABLE FOR ANY
DIRECT, INDIRECT, INCIDENTAL, SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES
(INCLUDING, BUT NOT LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES;
LOSS OF USE, DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND
ON ANY THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT
(INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE OF THIS
SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

75

vendor/github.com/dsnet/compress/README.md

generated

vendored

Normal file

@@ -0,0 +1,75 @@

# Collection of compression libraries for Go #

[GoDoc](https://godoc.org/github.com/dsnet/compress)
[Build Status](https://travis-ci.org/dsnet/compress)
[Report Card](https://goreportcard.com/report/github.com/dsnet/compress)

## Introduction ##

**NOTE: This library is in active development. As such, there are no guarantees about the stability of the API. The author reserves the right to arbitrarily break the API for any reason.**

This repository hosts a collection of compression related libraries. The goal of this project is to provide pure Go implementations for popular compression algorithms beyond what the Go standard library provides. The goals for these packages are as follows:
* Maintainable: That the code remains well documented, well tested, readable, easy to maintain, and easy to verify that it conforms to the specification for the format being implemented.
* Performant: To be able to compress and decompress within at least 80% of the rates that the C implementations are able to achieve.
* Flexible: That the code provides low-level and fine granularity control over the compression streams similar to what the C APIs would provide.

Of these three, the first objective is often at odds with the other two objectives and provides interesting challenges. Higher performance can often be achieved by muddling abstraction layers or using non-intuitive low-level primitives. Also, more features and functionality, while useful in some situations, often complicate the API. Thus, this package will attempt to satisfy all the goals, but will defer to favoring maintainability when the performance or flexibility benefits are not significant enough.


## Library Status ##

For the packages available, only some features are currently implemented:

| Package | Reader | Writer |
| ------- | :----: | :----: |
| brotli | :white_check_mark: | |
| bzip2 | :white_check_mark: | :white_check_mark: |
| flate | :white_check_mark: | |
| xflate | :white_check_mark: | :white_check_mark: |

This library is in active development. As such, there are no guarantees about the stability of the API. The author reserves the right to arbitrarily break the API for any reason. When the library becomes more mature, it is planned to eventually conform to some strict versioning scheme like [Semantic Versioning](http://semver.org/).

However, in the meantime, this library does provide some basic API guarantees. For the types defined below, the method signatures are guaranteed to not change. Note that the author still reserves the right to change the fields within the ```Reader``` and ```Writer``` structs.
```go
type ReaderConfig struct { ... }
type Reader struct { ... }
func NewReader(io.Reader, *ReaderConfig) (*Reader, error) { ... }
func (*Reader) Read([]byte) (int, error) { ... }
func (*Reader) Close() error { ... }

type WriterConfig struct { ... }
type Writer struct { ... }
func NewWriter(io.Writer, *WriterConfig) (*Writer, error) { ... }
func (*Writer) Write([]byte) (int, error) { ... }
func (*Writer) Close() error { ... }
```

To see what work still remains, see the [Task List](https://github.com/dsnet/compress/wiki/Task-List).

## Performance ##

See [Performance Metrics](https://github.com/dsnet/compress/wiki/Performance-Metrics).


## Frequently Asked Questions ##

See [Frequently Asked Questions](https://github.com/dsnet/compress/wiki/Frequently-Asked-Questions).


## Installation ##

Run the command:

```go get -u github.com/dsnet/compress```

This library requires `Go1.7` or higher in order to build.


## Packages ##

| Package | Description |
| :------ | :---------- |
| [brotli](http://godoc.org/github.com/dsnet/compress/brotli) | Package brotli implements the Brotli format, described in RFC 7932. |
| [bzip2](http://godoc.org/github.com/dsnet/compress/bzip2) | Package bzip2 implements the BZip2 compressed data format. |
| [flate](http://godoc.org/github.com/dsnet/compress/flate) | Package flate implements the DEFLATE format, described in RFC 1951. |
| [xflate](http://godoc.org/github.com/dsnet/compress/xflate) | Package xflate implements the XFLATE format, a random-access extension to DEFLATE. |

74

vendor/github.com/dsnet/compress/api.go

generated

vendored

Normal file

@@ -0,0 +1,74 @@

// Copyright 2015, Joe Tsai. All rights reserved.
// Use of this source code is governed by a BSD-style
// license that can be found in the LICENSE.md file.

// Package compress is a collection of compression libraries.
package compress

import (
	"bufio"
	"io"

	"github.com/dsnet/compress/internal/errors"
)

// The Error interface identifies all compression related errors.
type Error interface {
	error
	CompressError()

	// IsDeprecated reports the use of a deprecated and unsupported feature.
	IsDeprecated() bool

	// IsCorrupted reports whether the input stream was corrupted.
	IsCorrupted() bool
}

var _ Error = errors.Error{}

// ByteReader is an interface accepted by all decompression Readers.
// It guarantees that the decompressor never reads more data than is necessary
// from the underlying io.Reader.
type ByteReader interface {
	io.Reader
	io.ByteReader
}

var _ ByteReader = (*bufio.Reader)(nil)

// BufferedReader is an interface accepted by all decompression Readers.
// It guarantees that the decompressor never reads more data than is necessary
// from the underlying io.Reader. Since BufferedReader allows a decompressor
// to peek at bytes further along in the stream without advancing the read
// pointer, decompression can experience a significant performance gain when
// provided a reader that satisfies this interface. Thus, a decompressor will
// prefer this interface over ByteReader for performance reasons.
//
// The bufio.Reader satisfies this interface.
type BufferedReader interface {
	io.Reader

	// Buffered returns the number of bytes currently buffered.
	//
	// This value becomes invalid following the next Read/Discard operation.
	Buffered() int

	// Peek returns the next n bytes without advancing the reader.
	//
	// If Peek returns fewer than n bytes, it also returns an error explaining
	// why the peek is short. Peek must support peeking of at least 8 bytes.
	// If 0 <= n <= Buffered(), Peek is guaranteed to succeed without reading
	// from the underlying io.Reader.
	//
	// This result becomes invalid following the next Read/Discard operation.
	Peek(n int) ([]byte, error)

	// Discard skips the next n bytes, returning the number of bytes discarded.
	//
	// If Discard skips fewer than n bytes, it also returns an error.
	// If 0 <= n <= Buffered(), Discard is guaranteed to succeed without reading
	// from the underlying io.Reader.
	Discard(n int) (int, error)
}

var _ BufferedReader = (*bufio.Reader)(nil)

110

vendor/github.com/dsnet/compress/bzip2/bwt.go

generated

vendored

Normal file

@@ -0,0 +1,110 @@

// Copyright 2015, Joe Tsai. All rights reserved.
// Use of this source code is governed by a BSD-style
// license that can be found in the LICENSE.md file.

package bzip2

import "github.com/dsnet/compress/bzip2/internal/sais"

// The Burrows-Wheeler Transform implementation used here is based on the
// Suffix Array by Induced Sorting (SA-IS) methodology by Nong, Zhang, and Chan.
// This implementation uses the sais algorithm originally written by Yuta Mori.
//
// The SA-IS algorithm runs in O(n) and outputs a Suffix Array. There is a
// mathematical relationship between Suffix Arrays and the Burrows-Wheeler
// Transform, such that a SA can be converted to a BWT in O(n) time.
//
// References:
//	http://www.hpl.hp.com/techreports/Compaq-DEC/SRC-RR-124.pdf
//	https://github.com/cscott/compressjs/blob/master/lib/BWT.js
//	https://www.quora.com/How-can-I-optimize-burrows-wheeler-transform-and-inverse-transform-to-work-in-O-n-time-O-n-space
type burrowsWheelerTransform struct {
	buf  []byte
	sa   []int
	perm []uint32
}

func (bwt *burrowsWheelerTransform) Encode(buf []byte) (ptr int) {
	if len(buf) == 0 {
		return -1
	}

	// TODO(dsnet): Find a way to avoid the duplicate input string method.
	// We only need to do this because suffix arrays (by definition) only
	// operate on non-wrapped suffixes of a string. On the other hand,
	// the BWT specifically used in bzip2 operates on strings that wrap around
	// when being sorted.

	// Step 1: Concatenate the input string to itself so that we can use the
	// suffix array algorithm for bzip2's variant of BWT.
	n := len(buf)
	bwt.buf = append(append(bwt.buf[:0], buf...), buf...)
	if cap(bwt.sa) < 2*n {
		bwt.sa = make([]int, 2*n)
	}
	t := bwt.buf[:2*n]
	sa := bwt.sa[:2*n]

	// Step 2: Compute the suffix array (SA). The input string, t, will not be
	// modified, while the results will be written to the output, sa.
	sais.ComputeSA(t, sa)

	// Step 3: Convert the SA to a BWT. Since ComputeSA does not mutate the
	// input, we have two copies of the input; in buf and buf2. Thus, we write
	// the transformation to buf, while using buf2.
	var j int
	buf2 := t[n:]
	for _, i := range sa {
		if i < n {
			if i == 0 {
				ptr = j
				i = n
			}
			buf[j] = buf2[i-1]
			j++
		}
	}
	return ptr
}

func (bwt *burrowsWheelerTransform) Decode(buf []byte, ptr int) {
	if len(buf) == 0 {
		return
	}

	// Step 1: Compute cumm, where cumm[ch] reports the total number of
	// characters that precede the character ch in the alphabet.
	var cumm [256]int
	for _, v := range buf {
		cumm[v]++
	}
	var sum int
	for i, v := range cumm {
		cumm[i] = sum
		sum += v
	}

	// Step 2: Compute perm, where perm[ptr] contains a pointer to the next
	// byte in buf and the next pointer in perm itself.
	if cap(bwt.perm) < len(buf) {
		bwt.perm = make([]uint32, len(buf))
	}
	perm := bwt.perm[:len(buf)]
	for i, b := range buf {
		perm[cumm[b]] = uint32(i)
		cumm[b]++
	}

	// Step 3: Follow each pointer in perm to the next byte, starting with the
	// origin pointer.
	if cap(bwt.buf) < len(buf) {
		bwt.buf = make([]byte, len(buf))
	}
	buf2 := bwt.buf[:len(buf)]
	i := perm[ptr]
	for j := range buf2 {
		buf2[j] = buf[i]
		i = perm[i]
	}
	copy(buf, buf2)
}
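
To make the Encode and Decode steps in bwt.go concrete, here is a small self-contained sketch. It deliberately swaps the SA-IS suffix array for a naive sort of all cyclic rotations (quadratic, for illustration only), while the inverse follows the same three steps as Decode. The names `naiveBWT` and `inverseBWT` are hypothetical and do not exist in the package.

```go
package main

import (
	"bytes"
	"fmt"
	"sort"
)

// naiveBWT sorts all cyclic rotations of s directly and returns the last
// column plus the row index (ptr) at which the original string appears.
// This is a slow stand-in for the suffix-array based Encode.
func naiveBWT(s []byte) (out []byte, ptr int) {
	n := len(s)
	if n == 0 {
		return nil, -1
	}
	// Doubling the input lets a rotation be read as a plain slice.
	doubled := append(append([]byte(nil), s...), s...)
	rot := make([]int, n)
	for i := range rot {
		rot[i] = i
	}
	sort.SliceStable(rot, func(a, b int) bool {
		return bytes.Compare(doubled[rot[a]:rot[a]+n], doubled[rot[b]:rot[b]+n]) < 0
	})
	out = make([]byte, n)
	for j, i := range rot {
		if i == 0 {
			ptr = j
		}
		out[j] = doubled[i+n-1] // last byte of the rotation starting at i
	}
	return out, ptr
}

// inverseBWT mirrors the three steps of Decode: cumulative counts,
// the perm table, then following pointers from the origin pointer.
func inverseBWT(buf []byte, ptr int) []byte {
	if len(buf) == 0 {
		return nil
	}
	var cumm [256]int
	for _, v := range buf {
		cumm[v]++
	}
	sum := 0
	for i, v := range cumm {
		cumm[i] = sum
		sum += v
	}
	perm := make([]int, len(buf))
	for i, b := range buf {
		perm[cumm[b]] = i
		cumm[b]++
	}
	out := make([]byte, len(buf))
	i := perm[ptr]
	for j := range out {
		out[j] = buf[i]
		i = perm[i]
	}
	return out
}

func main() {
	out, ptr := naiveBWT([]byte("banana"))
	fmt.Printf("%s %d\n", out, ptr)
	fmt.Printf("%s\n", inverseBWT(out, ptr))
}
```

For the input `banana`, the sorted rotations are `abanan`, `anaban`, `ananab`, `banana`, `nabana`, `nanaba`, so the last column is `nnbaaa` and the original string sits at row 3.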

110

vendor/github.com/dsnet/compress/bzip2/common.go

generated

vendored

Normal file

@@ -0,0 +1,110 @@

// Copyright 2015, Joe Tsai. All rights reserved.
// Use of this source code is governed by a BSD-style
// license that can be found in the LICENSE.md file.

// Package bzip2 implements the BZip2 compressed data format.
//
// Canonical C implementation:
//	http://bzip.org
//
// Unofficial format specification:
//	https://github.com/dsnet/compress/blob/master/doc/bzip2-format.pdf
package bzip2

import (
	"fmt"
	"hash/crc32"

	"github.com/dsnet/compress/internal"
	"github.com/dsnet/compress/internal/errors"
)

// There does not exist a formal specification of the BZip2 format. As such,
// much of this work is derived by either reverse engineering the original C
// source code or using secondary sources.
//
// A significant amount of fuzz testing is done to ensure that outputs from
// this package are properly decoded by the C library. Furthermore, we test that
// both this package and the C library agree about what inputs are invalid.
//
// Compression stack:
//	Run-length encoding 1 (RLE1)
//	Burrows-Wheeler transform (BWT)
//	Move-to-front transform (MTF)
//	Run-length encoding 2 (RLE2)
//	Prefix encoding (PE)
//
// References:
//	http://bzip.org/
//	https://en.wikipedia.org/wiki/Bzip2
//	https://code.google.com/p/jbzip2/

const (
	BestSpeed          = 1
	BestCompression    = 9
	DefaultCompression = 6
)

const (
	hdrMagic = 0x425a         // Hex of "BZ"
	blkMagic = 0x314159265359 // BCD of PI
	endMagic = 0x177245385090 // BCD of sqrt(PI)

	blockSize = 100000
)

func errorf(c int, f string, a ...interface{}) error {
	return errors.Error{Code: c, Pkg: "bzip2", Msg: fmt.Sprintf(f, a...)}
}

func panicf(c int, f string, a ...interface{}) {
	errors.Panic(errorf(c, f, a...))
}

// errWrap converts a lower-level errors.Error to be one from this package.
// The replaceCode passed in will be used to replace the code for any errors
// with the errors.Invalid code.
//
// For the Reader, set this to errors.Corrupted.
// For the Writer, set this to errors.Internal.
func errWrap(err error, replaceCode int) error {
	if cerr, ok := err.(errors.Error); ok {
		if errors.IsInvalid(cerr) {
			cerr.Code = replaceCode
		}
		err = errorf(cerr.Code, "%s", cerr.Msg)
	}
	return err
}

var errClosed = errorf(errors.Closed, "")

// crc computes the CRC-32 used by BZip2.
//
// The CRC-32 computation in bzip2 treats bytes as having bits in big-endian
// order. That is, the MSB is read before the LSB. Thus, we can use the
// standard library version of CRC-32 IEEE with some minor adjustments.
//
// The byte array is used as an intermediate buffer to swap the bits of every
// byte of the input.
type crc struct {
	val uint32
	buf [256]byte
}

// update computes the CRC-32 of appending buf to c.
func (c *crc) update(buf []byte) {
	cval := internal.ReverseUint32(c.val)
	for len(buf) > 0 {
		n := len(buf)
		if n > len(c.buf) {
			n = len(c.buf)
		}
		for i, b := range buf[:n] {
			c.buf[i] = internal.ReverseLUT[b]
		}
		cval = crc32.Update(cval, crc32.IEEETable, c.buf[:n])
		buf = buf[n:]
	}
	c.val = internal.ReverseUint32(cval)
}
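
The bit-reversal trick described in the `crc` comment can be checked against a direct MSB-first CRC. The sketch below is an illustration under assumptions: it uses `math/bits` from the standard library in place of the package's internal `ReverseUint32` and `ReverseLUT` helpers, and the function names `crcViaIEEE` and `crcBitwise` are hypothetical.

```go
package main

import (
	"fmt"
	"hash/crc32"
	"math/bits"
)

// crcViaIEEE follows the approach described above: reverse the bits of the
// running value and of every input byte, run the standard IEEE CRC-32,
// then reverse the bits of the result.
func crcViaIEEE(val uint32, buf []byte) uint32 {
	rev := make([]byte, len(buf))
	for i, b := range buf {
		rev[i] = bits.Reverse8(b)
	}
	return bits.Reverse32(crc32.Update(bits.Reverse32(val), crc32.IEEETable, rev))
}

// crcBitwise is a reference MSB-first CRC-32 with polynomial 0x04c11db7,
// initial value and final XOR of all ones, computed one bit at a time.
func crcBitwise(val uint32, buf []byte) uint32 {
	crc := ^val
	for _, b := range buf {
		crc ^= uint32(b) << 24
		for i := 0; i < 8; i++ {
			if crc&0x80000000 != 0 {
				crc = (crc << 1) ^ 0x04c11db7
			} else {
				crc <<= 1
			}
		}
	}
	return ^crc
}

func main() {
	data := []byte("123456789")
	fmt.Printf("%08x %08x\n", crcViaIEEE(0, data), crcBitwise(0, data))
}
```

Both functions accept a running value, so the checksum can be extended chunk by chunk in the same way `update` processes its input in 256-byte pieces.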

13

vendor/github.com/dsnet/compress/bzip2/fuzz_off.go

generated

vendored

Normal file

@@ -0,0 +1,13 @@

// Copyright 2016, Joe Tsai. All rights reserved.
// Use of this source code is governed by a BSD-style
// license that can be found in the LICENSE.md file.

// +build !gofuzz

// This file exists to suppress fuzzing details from release builds.

package bzip2

type fuzzReader struct{}

func (*fuzzReader) updateChecksum(int64, uint32) {}

77

vendor/github.com/dsnet/compress/bzip2/fuzz_on.go

generated

vendored

Normal file

@@ -0,0 +1,77 @@

// Copyright 2016, Joe Tsai. All rights reserved.
// Use of this source code is governed by a BSD-style
// license that can be found in the LICENSE.md file.

// +build gofuzz

// This file exists to export internal implementation details for fuzz testing.

package bzip2

func ForwardBWT(buf []byte) (ptr int) {
	var bwt burrowsWheelerTransform
	return bwt.Encode(buf)
}

func ReverseBWT(buf []byte, ptr int) {
	var bwt burrowsWheelerTransform
	bwt.Decode(buf, ptr)
}

type fuzzReader struct {
	Checksums Checksums
}

// updateChecksum updates Checksums.
//
// If a valid pos is provided, it appends the (pos, val) pair to the slice.
// Otherwise, it will update the last record with the new value.
func (fr *fuzzReader) updateChecksum(pos int64, val uint32) {
	if pos >= 0 {
		fr.Checksums = append(fr.Checksums, Checksum{pos, val})
	} else {
		fr.Checksums[len(fr.Checksums)-1].Value = val
	}
}

type Checksum struct {
	Offset int64  // Bit offset of the checksum
	Value  uint32 // Checksum value
}

type Checksums []Checksum

// Apply overwrites all checksum fields in d with the ones in cs.
func (cs Checksums) Apply(d []byte) []byte {
	d = append([]byte(nil), d...)
	for _, c := range cs {
		setU32(d, c.Offset, c.Value)
	}
	return d
}

func setU32(d []byte, pos int64, val uint32) {
	for i := uint(0); i < 32; i++ {
		bpos := uint64(pos) + uint64(i)
		d[bpos/8] &= ^byte(1 << (7 - bpos%8))
		d[bpos/8] |= byte(val>>(31-i)) << (7 - bpos%8)
	}
}

// Verify checks that all checksum fields in d match those in cs.
func (cs Checksums) Verify(d []byte) bool {
	for _, c := range cs {
		if getU32(d, c.Offset) != c.Value {
			return false
		}
	}
	return true
}

func getU32(d []byte, pos int64) (val uint32) {
	for i := uint(0); i < 32; i++ {
		bpos := uint64(pos) + uint64(i)
		val |= (uint32(d[bpos/8] >> (7 - bpos%8))) << (31 - i)
	}
	return val
}

28

vendor/github.com/dsnet/compress/bzip2/internal/sais/common.go

generated

vendored

Normal file

@@ -0,0 +1,28 @@

// Copyright 2015, Joe Tsai. All rights reserved.
// Use of this source code is governed by a BSD-style
// license that can be found in the LICENSE.md file.

// Package sais implements a linear time suffix array algorithm.
package sais

//go:generate go run sais_gen.go byte sais_byte.go
//go:generate go run sais_gen.go int sais_int.go

// This package ports the C sais implementation by Yuta Mori. The ports are
// located in sais_byte.go and sais_int.go, which are identical to each other
// except for the types. Since Go does not support generics, we use generators to
// create the two files.
//
// References:
//	https://sites.google.com/site/yuta256/sais
//	https://www.researchgate.net/publication/221313676_Linear_Time_Suffix_Array_Construction_Using_D-Critical_Substrings
//	https://www.researchgate.net/publication/224176324_Two_Efficient_Algorithms_for_Linear_Time_Suffix_Array_Construction

// ComputeSA computes the suffix array of t and places the result in sa.
// Both t and sa must be the same length.
func ComputeSA(t []byte, sa []int) {
	if len(sa) != len(t) {
		panic("mismatching sizes")
	}
	computeSA_byte(t, sa, 0, len(t), 256)
}

661

vendor/github.com/dsnet/compress/bzip2/internal/sais/sais_byte.go

generated

vendored

Normal file

@@ -0,0 +1,661 @@

|

||||

// Copyright 2015, Joe Tsai. All rights reserved.

|

||||

// Use of this source code is governed by a BSD-style

|

||||

// license that can be found in the LICENSE.md file.

|

||||

|

||||

// Code generated by sais_gen.go. DO NOT EDIT.

|

||||

|

||||

// ====================================================

|

||||

// Copyright (c) 2008-2010 Yuta Mori All Rights Reserved.

|

||||

//

|

||||

// Permission is hereby granted, free of charge, to any person

|

||||

// obtaining a copy of this software and associated documentation

|

||||

// files (the "Software"), to deal in the Software without

|

||||

// restriction, including without limitation the rights to use,

|

||||

// copy, modify, merge, publish, distribute, sublicense, and/or sell

|

||||

// copies of the Software, and to permit persons to whom the

|

||||

// Software is furnished to do so, subject to the following

|

||||

// conditions:

|

||||

//

|

||||

// The above copyright notice and this permission notice shall be

|

||||

// included in all copies or substantial portions of the Software.

|

||||

//

|

||||

// THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND,

|

||||

// EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES

|

||||

// OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND

|

||||

// NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT

|

||||

// HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY,

|

||||

// WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING

|

||||

// FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR

|

||||

// OTHER DEALINGS IN THE SOFTWARE.

|

||||

// ====================================================

|

||||

|

||||

package sais

|

||||

|

||||

func getCounts_byte(T []byte, C []int, n, k int) {

|

||||

var i int

|

||||

for i = 0; i < k; i++ {

|

||||

C[i] = 0

|

||||

}

|

||||

for i = 0; i < n; i++ {

|

||||

C[T[i]]++

|

||||

}

|

||||

}

|

||||

|

||||

func getBuckets_byte(C, B []int, k int, end bool) {

|

||||

var i, sum int

|

||||

if end {

|

||||

for i = 0; i < k; i++ {

|

||||

sum += C[i]

|

||||

B[i] = sum

|

||||

}

|

||||

} else {

|

||||

for i = 0; i < k; i++ {

|

||||

sum += C[i]

|

||||

B[i] = sum - C[i]

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

func sortLMS1_byte(T []byte, SA, C, B []int, n, k int) {

|

||||

var b, i, j int

|

||||

var c0, c1 int

|

||||

|

||||

// Compute SAl.

|

||||

if &C[0] == &B[0] {

|

||||

getCounts_byte(T, C, n, k)

|

||||

}

|

||||

getBuckets_byte(C, B, k, false) // Find starts of buckets

|

||||

j = n - 1

|

||||

c1 = int(T[j])

|

||||

b = B[c1]

|

||||

j--

|

||||

if int(T[j]) < c1 {

|

||||

SA[b] = ^j

|

||||

} else {

|

||||

SA[b] = j

|

||||

}

|

||||

b++

|

||||

for i = 0; i < n; i++ {

|

||||

if j = SA[i]; j > 0 {

|

||||

if c0 = int(T[j]); c0 != c1 {

|

||||

B[c1] = b

|

||||

c1 = c0

|

||||

b = B[c1]

|

||||

}

|

||||

j--

|

||||

if int(T[j]) < c1 {

|

||||

SA[b] = ^j

|

||||

} else {

|

||||

SA[b] = j

|

||||

}

|

||||

b++

|

||||

SA[i] = 0

|

||||

} else if j < 0 {

|

||||

SA[i] = ^j

|

||||

}

|

||||

}

|

||||

|

||||

// Compute SAs.

|

||||

if &C[0] == &B[0] {

|

||||

getCounts_byte(T, C, n, k)

|

||||

}

|

||||

getBuckets_byte(C, B, k, true) // Find ends of buckets

|

||||

c1 = 0

|

||||

b = B[c1]

|

||||

for i = n - 1; i >= 0; i-- {

|

||||

if j = SA[i]; j > 0 {

|

||||

if c0 = int(T[j]); c0 != c1 {

|

||||

B[c1] = b

|

||||

c1 = c0

|

||||

b = B[c1]

|

||||

}

|

||||

j--

|

||||

b--

|

||||

if int(T[j]) > c1 {

|

||||

SA[b] = ^(j + 1)

|

||||

} else {

|

||||

SA[b] = j

|

||||

}

|

||||

SA[i] = 0

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

func postProcLMS1_byte(T []byte, SA []int, n, m int) int {

|

||||

var i, j, p, q, plen, qlen, name int

|

||||

var c0, c1 int

|

||||

var diff bool

|

||||

|

||||

// Compact all the sorted substrings into the first m items of SA.

|

||||

// 2*m must be not larger than n (provable).

|

||||

for i = 0; SA[i] < 0; i++ {

|

||||

SA[i] = ^SA[i]

|

||||

}

|

||||

if i < m {

|

||||

for j, i = i, i+1; ; i++ {

|

||||

if p = SA[i]; p < 0 {

|

||||

SA[j] = ^p

|

||||

j++

|

||||

SA[i] = 0

|

||||

if j == m {

|

||||

break

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

// Store the length of all substrings.

|

||||

i = n - 1

|

||||

j = n - 1

|

||||

c0 = int(T[n-1])

|

||||

for {

|

||||

c1 = c0

|

||||

if i--; i < 0 {

|

||||

break

|

||||

}

|

||||

if c0 = int(T[i]); c0 < c1 {

|

||||

break

|

||||

}

|

||||

}

|

||||

for i >= 0 {

|

||||

for {

|

||||

c1 = c0

|

||||

if i--; i < 0 {

|

||||

break

|

||||

}

|

||||

if c0 = int(T[i]); c0 > c1 {

|

||||

break

|

||||

}

|

||||

}

|

||||

if i >= 0 {

|

||||

SA[m+((i+1)>>1)] = j - i

|

||||

j = i + 1

|

||||

for {

|

||||

c1 = c0

|

||||

if i--; i < 0 {

|

||||

break

|

||||

}

|

||||

if c0 = int(T[i]); c0 < c1 {

|

||||

break

|

||||

}

|

||||

}

|

||||

}

|

||||

}

|

||||

|

||||

// Find the lexicographic names of all substrings.

|

||||

name = 0

|

||||

qlen = 0

|

||||

for i, q = 0, n; i < m; i++ {

|

||||

p = SA[i]

|

||||

plen = SA[m+(p>>1)]

|

||||

diff = true

|

||||

if (plen == qlen) && ((q + plen) < n) {

|

||||

for j = 0; (j < plen) && (T[p+j] == T[q+j]); j++ {

|

||||

}

|

||||

if j == plen {

|

||||

diff = false

|

||||

}

|

||||

}

|

||||

if diff {

|

||||

name++

|

||||

q = p

|

||||

qlen = plen

|

||||

}

|

||||

SA[m+(p>>1)] = name

|

||||

}

|

||||

return name

|

||||

}

func sortLMS2_byte(T []byte, SA, C, B, D []int, n, k int) {
	var b, i, j, t, d int
	var c0, c1 int

	// Compute SAl.
	getBuckets_byte(C, B, k, false) // Find starts of buckets
	j = n - 1
	c1 = int(T[j])
	b = B[c1]
	j--
	if int(T[j]) < c1 {
		t = 1
	} else {
		t = 0
	}
	j += n
	if t&1 > 0 {
		SA[b] = ^j
	} else {
		SA[b] = j
	}
	b++
	for i, d = 0, 0; i < n; i++ {
		if j = SA[i]; j > 0 {
			if n <= j {
				d += 1
				j -= n
			}
			if c0 = int(T[j]); c0 != c1 {
				B[c1] = b
				c1 = c0
				b = B[c1]
			}
			j--
			t = int(c0) << 1
			if int(T[j]) < c1 {
				t |= 1
			}
			if D[t] != d {
				j += n
				D[t] = d
			}
			if t&1 > 0 {
				SA[b] = ^j
			} else {
				SA[b] = j
			}
			b++
			SA[i] = 0
		} else if j < 0 {
			SA[i] = ^j
		}
	}
	for i = n - 1; 0 <= i; i-- {
		if SA[i] > 0 {
			if SA[i] < n {
				SA[i] += n
				for j = i - 1; SA[j] < n; j-- {
				}
				SA[j] -= n
				i = j
			}
		}
	}

	// Compute SAs.
	getBuckets_byte(C, B, k, true) // Find ends of buckets
	c1 = 0
	b = B[c1]
	for i, d = n-1, d+1; i >= 0; i-- {
		if j = SA[i]; j > 0 {
			if n <= j {
				d += 1
				j -= n
			}
			if c0 = int(T[j]); c0 != c1 {
				B[c1] = b
				c1 = c0
				b = B[c1]
			}
			j--
			t = int(c0) << 1
			if int(T[j]) > c1 {
				t |= 1
			}
			if D[t] != d {
				j += n
				D[t] = d
			}
			b--
			if t&1 > 0 {
				SA[b] = ^(j + 1)
			} else {
				SA[b] = j
			}
			SA[i] = 0
		}
	}
}

func postProcLMS2_byte(SA []int, n, m int) int {
	var i, j, d, name int

	// Compact all the sorted LMS substrings into the first m items of SA.
	name = 0
	for i = 0; SA[i] < 0; i++ {
		j = ^SA[i]
		if n <= j {
			name += 1
		}
		SA[i] = j
	}
	if i < m {
		for d, i = i, i+1; ; i++ {
			if j = SA[i]; j < 0 {
				j = ^j
				if n <= j {
					name += 1
				}
				SA[d] = j
				d++
				SA[i] = 0
				if d == m {
					break
				}
			}
		}
	}
	if name < m {
		// Store the lexicographic names.
		for i, d = m-1, name+1; 0 <= i; i-- {
			if j = SA[i]; n <= j {
				j -= n
				d--
			}
			SA[m+(j>>1)] = d
		}
	} else {
		// Unset flags.
		for i = 0; i < m; i++ {
			if j = SA[i]; n <= j {
				j -= n
				SA[i] = j
			}
		}
	}
	return name
}

func induceSA_byte(T []byte, SA, C, B []int, n, k int) {
	var b, i, j int
	var c0, c1 int

	// Compute SAl.
	if &C[0] == &B[0] {
		getCounts_byte(T, C, n, k)
	}
	getBuckets_byte(C, B, k, false) // Find starts of buckets
	j = n - 1
	c1 = int(T[j])
	b = B[c1]
	if j > 0 && int(T[j-1]) < c1 {
		SA[b] = ^j
	} else {
		SA[b] = j
	}
	b++
	for i = 0; i < n; i++ {
		j = SA[i]
		SA[i] = ^j
		if j > 0 {
			j--
			if c0 = int(T[j]); c0 != c1 {
				B[c1] = b
				c1 = c0
				b = B[c1]
			}
			if j > 0 && int(T[j-1]) < c1 {
				SA[b] = ^j
			} else {
				SA[b] = j
			}
			b++
		}
	}

	// Compute SAs.
	if &C[0] == &B[0] {
		getCounts_byte(T, C, n, k)
	}
	getBuckets_byte(C, B, k, true) // Find ends of buckets
	c1 = 0
	b = B[c1]
	for i = n - 1; i >= 0; i-- {
		if j = SA[i]; j > 0 {
			j--
			if c0 = int(T[j]); c0 != c1 {
				B[c1] = b
				c1 = c0
				b = B[c1]
			}
			b--
			if (j == 0) || (int(T[j-1]) > c1) {
				SA[b] = ^j
			} else {
				SA[b] = j
			}
		} else {
			SA[i] = ^j
		}
	}
}

func computeSA_byte(T []byte, SA []int, fs, n, k int) {
	const (
		minBucketSize = 512
		sortLMS2Limit = 0x3fffffff
	)

	var C, B, D, RA []int
	var bo int // Offset of B relative to SA
	var b, i, j, m, p, q, name, newfs int
	var c0, c1 int
	var flags uint

	if k <= minBucketSize {
		C = make([]int, k)
		if k <= fs {
			bo = n + fs - k
			B = SA[bo:]
			flags = 1
		} else {
			B = make([]int, k)
			flags = 3
		}
	} else if k <= fs {
		C = SA[n+fs-k:]
		if k <= fs-k {
			bo = n + fs - 2*k
			B = SA[bo:]
			flags = 0
		} else if k <= 4*minBucketSize {
			B = make([]int, k)
			flags = 2
		} else {
			B = C
			flags = 8
		}
	} else {
		C = make([]int, k)
		B = C
		flags = 4 | 8
	}
	if n <= sortLMS2Limit && 2 <= (n/k) {
		if flags&1 > 0 {
			if 2*k <= fs-k {
				flags |= 32
			} else {
				flags |= 16
			}
		} else if flags == 0 && 2*k <= (fs-2*k) {
			flags |= 32
		}
	}

	// Stage 1: Reduce the problem by at least 1/2.
	// Sort all the LMS-substrings.
	getCounts_byte(T, C, n, k)
	getBuckets_byte(C, B, k, true) // Find ends of buckets
	for i = 0; i < n; i++ {
		SA[i] = 0
	}
	b = -1
	i = n - 1
	j = n
	m = 0
	c0 = int(T[n-1])
	for {
		c1 = c0
		if i--; i < 0 {
			break
		}
		if c0 = int(T[i]); c0 < c1 {
			break
		}
	}
	for i >= 0 {
		for {
			c1 = c0
			if i--; i < 0 {
				break
			}
			if c0 = int(T[i]); c0 > c1 {
				break
			}
		}
		if i >= 0 {
			if b >= 0 {
				SA[b] = j
			}
			B[c1]--
			b = B[c1]
			j = i
			m++
			for {
				c1 = c0
				if i--; i < 0 {
					break
				}
				if c0 = int(T[i]); c0 < c1 {
					break
				}
			}
		}
	}

	if m > 1 {
		if flags&(16|32) > 0 {
			if flags&16 > 0 {
				D = make([]int, 2*k)
			} else {
				D = SA[bo-2*k:]
			}
			B[T[j+1]]++
			for i, j = 0, 0; i < k; i++ {
				j += C[i]
				if B[i] != j {
					SA[B[i]] += n
				}
				D[i] = 0
				D[i+k] = 0
			}
			sortLMS2_byte(T, SA, C, B, D, n, k)
			name = postProcLMS2_byte(SA, n, m)
		} else {
			sortLMS1_byte(T, SA, C, B, n, k)
			name = postProcLMS1_byte(T, SA, n, m)
		}
	} else if m == 1 {
		SA[b] = j + 1
		name = 1
	} else {
		name = 0
	}

	// Stage 2: Solve the reduced problem.
	// Recurse if names are not yet unique.
	if name < m {
		newfs = n + fs - 2*m
		if flags&(1|4|8) == 0 {
			if k+name <= newfs {
				newfs -= k
			} else {
				flags |= 8
			}
		}
		RA = SA[m+newfs:]
		for i, j = m+(n>>1)-1, m-1; m <= i; i-- {
			if SA[i] != 0 {
				RA[j] = SA[i] - 1
				j--
			}
		}
		computeSA_int(RA, SA, newfs, m, name)

		i = n - 1
		j = m - 1
		c0 = int(T[n-1])
		for {
			c1 = c0
			if i--; i < 0 {
				break
			}
			if c0 = int(T[i]); c0 < c1 {
				break
			}
		}
		for i >= 0 {
			for {
				c1 = c0
				if i--; i < 0 {
					break
				}
				if c0 = int(T[i]); c0 > c1 {
					break
				}
			}
			if i >= 0 {
				RA[j] = i + 1
				j--
				for {
					c1 = c0
					if i--; i < 0 {
						break
					}
					if c0 = int(T[i]); c0 < c1 {
						break
					}
				}
			}
		}
		for i = 0; i < m; i++ {
			SA[i] = RA[SA[i]]
		}
		if flags&4 > 0 {
			B = make([]int, k)
			C = B
		}
		if flags&2 > 0 {
			B = make([]int, k)
		}
	}

	// Stage 3: Induce the result for the original problem.
	if flags&8 > 0 {
		getCounts_byte(T, C, n, k)
	}
	// Put all left-most S characters into their buckets.
	if m > 1 {
		getBuckets_byte(C, B, k, true) // Find ends of buckets
		i = m - 1
		j = n
		p = SA[m-1]
		c1 = int(T[p])
		for {
			c0 = c1
			q = B[c0]
			for q < j {
				j--
				SA[j] = 0
			}
			for {
				j--
				SA[j] = p
				if i--; i < 0 {
					break
				}
				p = SA[i]
				if c1 = int(T[p]); c1 != c0 {
					break
				}
			}
			if i < 0 {
				break
			}
		}
		for j > 0 {
			j--
			SA[j] = 0
		}
	}
	induceSA_byte(T, SA, C, B, n, k)
}
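
Reviewer note: the right-to-left scan that appears in Stage 1 and again in Stage 2 of `computeSA_byte` is easier to follow in isolation. The sketch below is not part of the vendored package. `lmsPositions` is a hypothetical helper name, and it only reproduces the scan that Stage 2 uses to fill `RA[j] = i + 1`, collecting the indices into a slice instead.

```go
package main

import "fmt"

// lmsPositions is a hypothetical helper (not in the vendored code). It mirrors
// the right-to-left scan in computeSA_byte and returns the start index of each
// LMS suffix in the order the scan discovers them (right to left).
func lmsPositions(T []byte) []int {
	var out []int
	n := len(T)
	if n == 0 {
		return out
	}
	i := n - 1
	c0 := int(T[n-1])
	var c1 int
	// Skip the trailing run of L-type characters.
	for {
		c1 = c0
		if i--; i < 0 {
			break
		}
		if c0 = int(T[i]); c0 < c1 {
			break
		}
	}
	for i >= 0 {
		// Walk left through S-type characters until an L-type one appears.
		for {
			c1 = c0
			if i--; i < 0 {
				break
			}
			if c0 = int(T[i]); c0 > c1 {
				break
			}
		}
		if i >= 0 {
			// T[i] is L-type and T[i+1] is S-type, so i+1 starts an LMS suffix.
			out = append(out, i+1)
			// Walk left through L-type characters until an S-type one appears.
			for {
				c1 = c0
				if i--; i < 0 {
					break
				}
				if c0 = int(T[i]); c0 < c1 {
					break
				}
			}
		}
	}
	return out
}

func main() {
	fmt.Println(lmsPositions([]byte("banana"))) // prints [3 1]
}
```

For "banana" the S-type positions preceded by an L-type character are 1 and 3, which matches the two entries found by the scan.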

661

vendor/github.com/dsnet/compress/bzip2/internal/sais/sais_int.go

generated

vendored

Normal file

@@ -0,0 +1,661 @@

// Copyright 2015, Joe Tsai. All rights reserved.
// Use of this source code is governed by a BSD-style
// license that can be found in the LICENSE.md file.

// Code generated by sais_gen.go. DO NOT EDIT.

// ====================================================
// Copyright (c) 2008-2010 Yuta Mori All Rights Reserved.
//
// Permission is hereby granted, free of charge, to any person
// obtaining a copy of this software and associated documentation
// files (the "Software"), to deal in the Software without
// restriction, including without limitation the rights to use,
// copy, modify, merge, publish, distribute, sublicense, and/or sell
// copies of the Software, and to permit persons to whom the
// Software is furnished to do so, subject to the following
// conditions:
//
// The above copyright notice and this permission notice shall be
// included in all copies or substantial portions of the Software.
//
// THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND,
// EXPRESS OR IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES
// OF MERCHANTABILITY, FITNESS FOR A PARTICULAR PURPOSE AND
// NONINFRINGEMENT. IN NO EVENT SHALL THE AUTHORS OR COPYRIGHT
// HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER LIABILITY,
// WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING
// FROM, OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR
// OTHER DEALINGS IN THE SOFTWARE.
// ====================================================

package sais

func getCounts_int(T []int, C []int, n, k int) {
	var i int
	for i = 0; i < k; i++ {
		C[i] = 0
	}
	for i = 0; i < n; i++ {
		C[T[i]]++
	}
}

func getBuckets_int(C, B []int, k int, end bool) {
	var i, sum int
	if end {
		for i = 0; i < k; i++ {
			sum += C[i]
			B[i] = sum
		}
	} else {
		for i = 0; i < k; i++ {
			sum += C[i]
			B[i] = sum - C[i]
		}
	}
}
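
Reviewer note: `getCounts_int` and `getBuckets_int` together turn a text into per-symbol bucket boundaries inside the suffix array. The standalone sketch below illustrates what the `end` flag selects. The names `counts` and `buckets` are hypothetical stand-ins, not the vendored functions, and they allocate their results rather than writing into caller-supplied slices.

```go
package main

import "fmt"

// counts tallies how often each symbol in [0, k) occurs in T.
// Hypothetical stand-in for getCounts_int.
func counts(T []int, k int) []int {
	C := make([]int, k)
	for _, c := range T {
		C[c]++
	}
	return C
}

// buckets returns, for each symbol, the exclusive end offset of its bucket
// when end is true, and the start offset otherwise.
// Hypothetical stand-in for getBuckets_int.
func buckets(C []int, end bool) []int {
	B := make([]int, len(C))
	sum := 0
	for i, c := range C {
		sum += c
		if end {
			B[i] = sum
		} else {
			B[i] = sum - c
		}
	}
	return B
}

func main() {
	T := []int{1, 0, 2, 0, 2, 0} // "banana" with a=0, b=1, n=2
	C := counts(T, 3)
	fmt.Println(C)                 // prints [3 1 2]
	fmt.Println(buckets(C, false)) // prints [0 3 4]
	fmt.Println(buckets(C, true))  // prints [3 4 6]
}
```

The induced-sorting passes in this file fill L-type suffixes forward from the bucket starts and S-type suffixes backward from the bucket ends, which is why both variants are needed.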

func sortLMS1_int(T []int, SA, C, B []int, n, k int) {
	var b, i, j int
	var c0, c1 int

	// Compute SAl.
	if &C[0] == &B[0] {
		getCounts_int(T, C, n, k)
	}
	getBuckets_int(C, B, k, false) // Find starts of buckets
	j = n - 1
	c1 = int(T[j])
	b = B[c1]
	j--
	if int(T[j]) < c1 {
		SA[b] = ^j
	} else {
		SA[b] = j
	}
	b++
	for i = 0; i < n; i++ {
		if j = SA[i]; j > 0 {
			if c0 = int(T[j]); c0 != c1 {
				B[c1] = b
				c1 = c0
				b = B[c1]
			}
			j--
			if int(T[j]) < c1 {
				SA[b] = ^j
			} else {
				SA[b] = j
			}
			b++
			SA[i] = 0
		} else if j < 0 {
			SA[i] = ^j
		}
	}

	// Compute SAs.
	if &C[0] == &B[0] {
		getCounts_int(T, C, n, k)
	}
	getBuckets_int(C, B, k, true) // Find ends of buckets
	c1 = 0
	b = B[c1]
	for i = n - 1; i >= 0; i-- {
		if j = SA[i]; j > 0 {
			if c0 = int(T[j]); c0 != c1 {
				B[c1] = b
				c1 = c0
				b = B[c1]
			}
			j--
			b--
			if int(T[j]) > c1 {
				SA[b] = ^(j + 1)
			} else {
				SA[b] = j
			}
			SA[i] = 0
		}
	}
}

func postProcLMS1_int(T []int, SA []int, n, m int) int {
	var i, j, p, q, plen, qlen, name int
	var c0, c1 int
	var diff bool

	// Compact all the sorted substrings into the first m items of SA.
	// 2*m must be not larger than n (provable).
	for i = 0; SA[i] < 0; i++ {
		SA[i] = ^SA[i]
	}
	if i < m {
		for j, i = i, i+1; ; i++ {
			if p = SA[i]; p < 0 {
				SA[j] = ^p
				j++
				SA[i] = 0
				if j == m {
					break
				}
			}
		}
	}

	// Store the length of all substrings.
	i = n - 1
	j = n - 1
	c0 = int(T[n-1])
	for {
		c1 = c0
		if i--; i < 0 {
			break
		}
		if c0 = int(T[i]); c0 < c1 {
			break
		}
	}
	for i >= 0 {
		for {
			c1 = c0
			if i--; i < 0 {
				break
			}
			if c0 = int(T[i]); c0 > c1 {
				break
			}
		}
		if i >= 0 {
			SA[m+((i+1)>>1)] = j - i
			j = i + 1
			for {
				c1 = c0
				if i--; i < 0 {
					break
				}
				if c0 = int(T[i]); c0 < c1 {
					break
				}
			}
		}
	}

	// Find the lexicographic names of all substrings.
	name = 0
	qlen = 0
	for i, q = 0, n; i < m; i++ {
		p = SA[i]
		plen = SA[m+(p>>1)]
		diff = true
		if (plen == qlen) && ((q + plen) < n) {
			for j = 0; (j < plen) && (T[p+j] == T[q+j]); j++ {
			}
			if j == plen {
				diff = false
			}
		}
		if diff {
			name++
			q = p
			qlen = plen
		}
		SA[m+(p>>1)] = name
	}
	return name
}

func sortLMS2_int(T []int, SA, C, B, D []int, n, k int) {
	var b, i, j, t, d int
	var c0, c1 int

	// Compute SAl.
	getBuckets_int(C, B, k, false) // Find starts of buckets
	j = n - 1
	c1 = int(T[j])
	b = B[c1]
	j--
	if int(T[j]) < c1 {
		t = 1
	} else {
		t = 0
	}
	j += n
	if t&1 > 0 {
		SA[b] = ^j
	} else {
		SA[b] = j
	}
	b++
	for i, d = 0, 0; i < n; i++ {
		if j = SA[i]; j > 0 {
			if n <= j {
				d += 1
				j -= n
			}
			if c0 = int(T[j]); c0 != c1 {
				B[c1] = b
				c1 = c0
				b = B[c1]
			}
			j--
			t = int(c0) << 1
			if int(T[j]) < c1 {
				t |= 1
			}
			if D[t] != d {
				j += n
				D[t] = d
			}
			if t&1 > 0 {
				SA[b] = ^j
			} else {
				SA[b] = j
			}
			b++
			SA[i] = 0
		} else if j < 0 {
			SA[i] = ^j
		}
	}
	for i = n - 1; 0 <= i; i-- {
		if SA[i] > 0 {
			if SA[i] < n {
				SA[i] += n
				for j = i - 1; SA[j] < n; j-- {
				}
				SA[j] -= n
				i = j
			}
		}
	}

	// Compute SAs.
	getBuckets_int(C, B, k, true) // Find ends of buckets
	c1 = 0
	b = B[c1]
	for i, d = n-1, d+1; i >= 0; i-- {
		if j = SA[i]; j > 0 {
			if n <= j {
				d += 1
				j -= n
			}
			if c0 = int(T[j]); c0 != c1 {
				B[c1] = b
				c1 = c0
				b = B[c1]
			}
			j--
			t = int(c0) << 1
			if int(T[j]) > c1 {
				t |= 1
			}
			if D[t] != d {
				j += n
				D[t] = d
			}
			b--
			if t&1 > 0 {
				SA[b] = ^(j + 1)
			} else {
				SA[b] = j
			}
			SA[i] = 0
		}
	}
}

func postProcLMS2_int(SA []int, n, m int) int {
	var i, j, d, name int

	// Compact all the sorted LMS substrings into the first m items of SA.
	name = 0
	for i = 0; SA[i] < 0; i++ {
		j = ^SA[i]
		if n <= j {
			name += 1
		}
		SA[i] = j
	}
	if i < m {
		for d, i = i, i+1; ; i++ {
			if j = SA[i]; j < 0 {
				j = ^j
				if n <= j {
					name += 1
				}
				SA[d] = j
				d++
				SA[i] = 0
				if d == m {
					break
				}
			}
		}
	}
	if name < m {
		// Store the lexicographic names.
		for i, d = m-1, name+1; 0 <= i; i-- {
			if j = SA[i]; n <= j {
				j -= n
				d--
			}
			SA[m+(j>>1)] = d
		}
	} else {
		// Unset flags.
		for i = 0; i < m; i++ {
			if j = SA[i]; n <= j {
				j -= n
				SA[i] = j
			}
		}
	}
	return name
}

func induceSA_int(T []int, SA, C, B []int, n, k int) {
	var b, i, j int
	var c0, c1 int

	// Compute SAl.
	if &C[0] == &B[0] {
		getCounts_int(T, C, n, k)
	}
	getBuckets_int(C, B, k, false) // Find starts of buckets
	j = n - 1
	c1 = int(T[j])
	b = B[c1]
	if j > 0 && int(T[j-1]) < c1 {
		SA[b] = ^j
	} else {
		SA[b] = j
	}
	b++
	for i = 0; i < n; i++ {
		j = SA[i]
		SA[i] = ^j
		if j > 0 {
			j--
			if c0 = int(T[j]); c0 != c1 {
				B[c1] = b
				c1 = c0
				b = B[c1]
			}
			if j > 0 && int(T[j-1]) < c1 {
				SA[b] = ^j
			} else {
				SA[b] = j
			}
			b++
		}
	}

	// Compute SAs.
	if &C[0] == &B[0] {
		getCounts_int(T, C, n, k)
	}
	getBuckets_int(C, B, k, true) // Find ends of buckets
	c1 = 0
	b = B[c1]
	for i = n - 1; i >= 0; i-- {
		if j = SA[i]; j > 0 {
			j--
			if c0 = int(T[j]); c0 != c1 {
				B[c1] = b
				c1 = c0
				b = B[c1]
			}
			b--
			if (j == 0) || (int(T[j-1]) > c1) {
				SA[b] = ^j
			} else {
				SA[b] = j
			}
		} else {
			SA[i] = ^j
		}
	}
}

func computeSA_int(T []int, SA []int, fs, n, k int) {
	const (
		minBucketSize = 512
		sortLMS2Limit = 0x3fffffff
	)

	var C, B, D, RA []int
	var bo int // Offset of B relative to SA
	var b, i, j, m, p, q, name, newfs int
	var c0, c1 int
	var flags uint

	if k <= minBucketSize {
		C = make([]int, k)
		if k <= fs {
			bo = n + fs - k
			B = SA[bo:]
			flags = 1
		} else {
			B = make([]int, k)
			flags = 3
		}
	} else if k <= fs {
		C = SA[n+fs-k:]
		if k <= fs-k {

|

||||